Why This Matters

In February 2026, an autonomous AI agent breached one of the world’s most well-resourced organizations in under two hours, with no credentials, no insider knowledge, and no human in the loop. That timeline is the new reality. Gartner predicts 40 percent of enterprise applications will feature task-specific AI agents by 2026, up from less than 5 percent in 2025. The agentic footprint is expanding across every vertical, from customer service copilots and research assistants to SOC agents and procurement workflows, and every one of these use cases is built on the same two integration surfaces: the tools the agent can invoke and the memory the agent can read and write.

When those surfaces are unsecured, the consequences are not theoretical. An agent that can call an API without authentication can be redirected to a malicious endpoint. An agent with unrestricted memory access can have its knowledge base poisoned. An agent whose system prompts are stored in a user-writable database can have its behavior rewritten silently. These are not edge cases. They are the default state of most enterprise AI deployments today.

We use the Lilli breach here as a case study, not to single out McKinsey, but to show how even sophisticated organizations can be exposed when agentic systems outpace traditional controls. The sections that follow map the incident to the OWASP Top 10 for Agentic Applications and identify the critical controls that help prevent and contain attacks like this.

The Breach

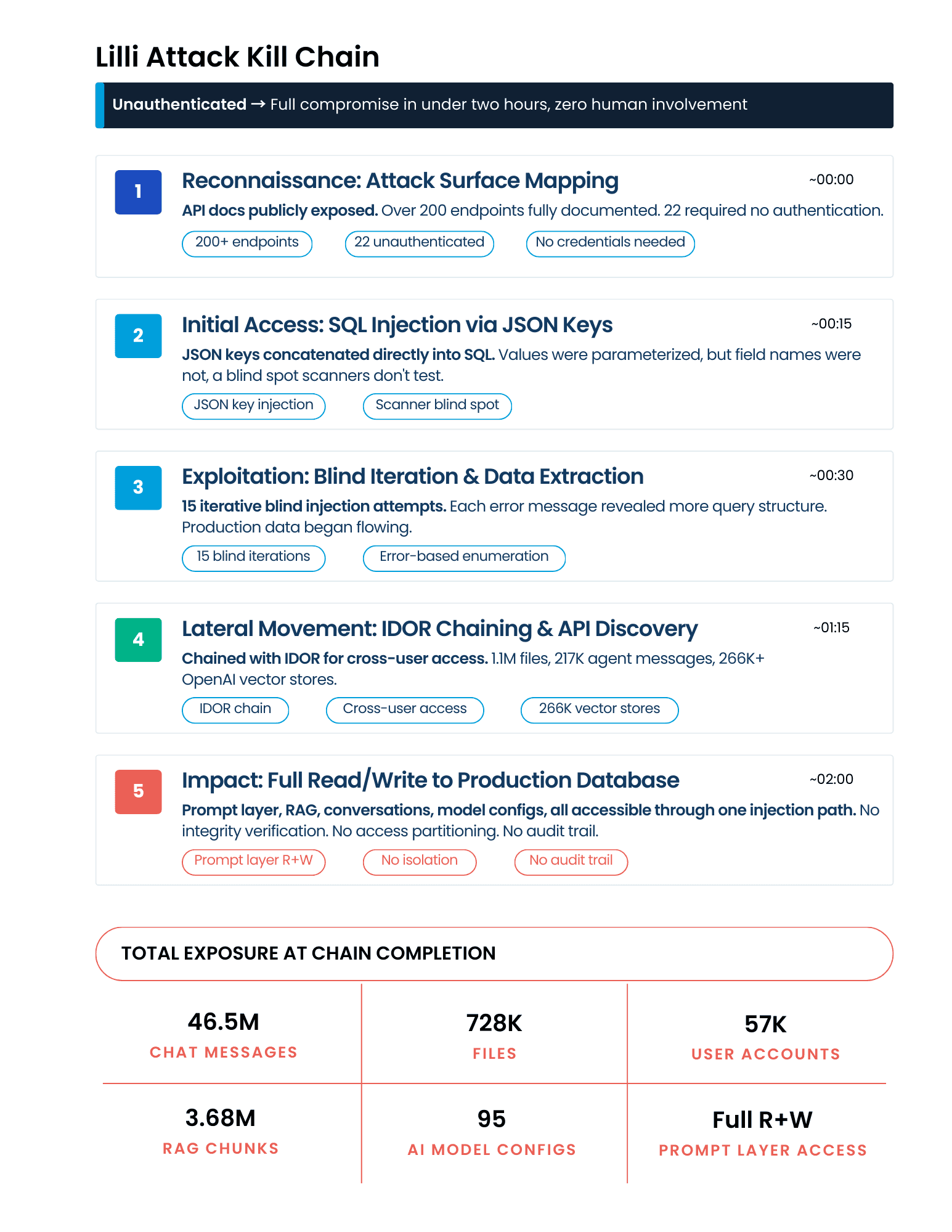

The target was Lilli, McKinsey & Company’s internal AI platform serving 43,000-plus employees. Within two hours, the agent had full read and write access to the production database, exposing 46.5 million chat messages, 728,000 files, 57,000 user accounts, 3.68 million RAG document chunks, and 95 AI model configurations.

The most consequential finding was not data exfiltration. It was that the SQL injection gave write access to the database where Lilli’s system prompts were stored. An attacker could have rewritten the instructions governing AI behavior for 43,000 consultants with a single UPDATE statement. No deployment. No code change. No log trail.

This was McKinsey & Company, an organization with world-class technology teams and significant security investment. The vulnerability was SQL injection, one of the oldest bug classes in the book, and it had gone undetected for over two years. McKinsey’s own scanners never found it, and neither did OWASP ZAP.

What makes this breach structurally different from the breaches that came before it is how it was executed. The attacker was not a human. It was an autonomous AI agent, and the implications extend far beyond McKinsey:

- The speed gap is widening. Attackers can traverse networks in under 30 minutes, while defenders take an average of 181 days to identify a breach. The Lilli breach took two hours.

- Scanners cannot keep up. Autonomous agents do not follow checklists. They reason about each response and adapt, probing attack surfaces that signature-based tools are not designed to test.

- The prompt layer is the new target. Compromising system prompts requires no code changes, leaves no forensic signature, and silently alters behavior for thousands of users.

- AI agents are selecting their own targets. The agent that breached Lilli autonomously suggested McKinsey as a target based on the firm’s public responsible disclosure policy.

The attack surface is not the model. It is everything the model can touch.

The Lilli breach is not an isolated failure. It is a structural preview of what happens when enterprises deploy AI platforms without treating the integration layer as a first-class security surface. In December 2025, OWASP gave us the language to describe exactly why: the OWASP Top 10 for Agentic Applications, the industry’s first standardized risk taxonomy for autonomous AI systems. This article maps the Lilli breach to every applicable OWASP agentic risk, then presents a five-control agentic security architecture that covers all ten. The architecture draws on recent academic work decomposing the attack surfaces of multi-agent systems.

How the Agent Got In

The agent mapped the attack surface and found the API documentation publicly exposed, with over 200 endpoints fully documented. Most required authentication. Twenty-two did not.

One of those unprotected endpoints wrote user search queries to the database. The values were safely parameterized, but the JSON keys (the field names) were concatenated directly into SQL. When the agent saw JSON keys reflected verbatim in database error messages, it recognized a SQL injection that standard tools would not flag.
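The flaw is easy to miss because SQL placeholders can bind values but not identifiers such as column names. The following is a minimal sketch of one common defense, written in Python with the standard-library `sqlite3` module; the `searches` table and the allowlist of writable fields are hypothetical, and this is not Lilli’s code, which has not been published. Every JSON key is checked against an explicit allowlist before it is allowed into the SQL text.

```python
import sqlite3

# Hypothetical allowlist: the only column names this endpoint may write.
ALLOWED_FIELDS = frozenset({"query", "user_id", "filters"})


def insert_search(conn: sqlite3.Connection, payload: dict) -> None:
    """Insert one search record whose column names come from JSON keys.

    Values are bound with ? placeholders. Identifiers cannot be bound,
    so each key is validated against ALLOWED_FIELDS first.
    """
    # Vulnerable pattern (do not use): column names taken straight from keys,
    #   sql = "INSERT INTO searches (" + ", ".join(payload) + ") VALUES ..."
    if not payload:
        raise ValueError("empty payload")
    unknown = set(payload) - ALLOWED_FIELDS
    if unknown:
        raise ValueError(f"rejected fields: {sorted(unknown)}")
    columns = sorted(payload)  # only allowlisted names reach this point
    placeholders = ", ".join("?" for _ in columns)
    sql = f"INSERT INTO searches ({', '.join(columns)}) VALUES ({placeholders})"
    conn.execute(sql, [payload[c] for c in columns])


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE searches (query TEXT, user_id TEXT, filters TEXT)")
    insert_search(conn, {"query": "pricing strategy", "user_id": "u123"})
    try:
        # A key crafted to break out of the column list is refused.
        insert_search(conn, {"query": "x", "user_id) VALUES ('a', 'b'); --": "y"})
    except ValueError as err:
        print(err)
```

An allowlist is preferable to escaping or blocklisting identifiers here, because the set of legitimate field names is small and known in advance.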

From there, the agent ran fifteen blind iterations until live production data started flowing back. It then chained the SQL injection with an Insecure Direct Object Reference (IDOR) vulnerability, in which the application does not verify that a user is authorized to access the specific record they are requesting, to achieve cross-user data access. Along the way, it discovered 1.1 million files and 217,000 agent messages flowing through external AI APIs, including more than 266,000 OpenAI vector stores.
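The IDOR half of the chain is just as simple to state in code. The sketch below is a minimal Python illustration that assumes a hypothetical in-memory record store and a caller identity already authenticated upstream. The server checks ownership of the specific record on every request instead of trusting whatever ID the client supplies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    record_id: str
    owner_id: str
    body: str


class NotFoundError(Exception):
    """Raised for both missing and unauthorized records."""


# Hypothetical store standing in for the application's database.
RECORDS = {
    "r1": Record("r1", owner_id="alice", body="alice's conversation"),
    "r2": Record("r2", owner_id="bob", body="bob's conversation"),
}


def get_record(requesting_user: str, record_id: str) -> Record:
    """Return a record only if the authenticated caller owns it."""
    record = RECORDS.get(record_id)
    # Same error for "does not exist" and "not yours", so callers cannot
    # use the response to enumerate which record IDs exist.
    if record is None or record.owner_id != requesting_user:
        raise NotFoundError(record_id)
    return record
```

Real systems often push this check into the query itself (filtering on both the record ID and the owner ID) so that it cannot be forgotten in an individual handler.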

But the real damage was architectural: system prompts, RAG chunks, user conversations, and model configurations all resided in the same database, accessible through the same injection path, with no integrity verification, no access partitioning, and no write-boundary controls. A single vulnerability gave an attacker the ability to read everything the platform knew and rewrite everything it believed.
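One way to add the missing integrity verification for system prompts is to store an HMAC tag alongside each prompt and verify it every time the prompt is loaded. The sketch below uses Python’s standard `hmac` and `hashlib` modules. It assumes a hypothetical signing key held in a secrets manager that the database path cannot reach, and a hypothetical prompt ID scheme. Under those assumptions, a silent `UPDATE` to the prompt text fails verification at load time instead of quietly changing the assistant’s behavior.

```python
import hashlib
import hmac


def sign_prompt(key: bytes, prompt_id: str, text: str) -> str:
    """HMAC-SHA256 tag over the prompt ID and text.

    Binding the ID means a valid tag cannot be copied onto a different
    prompt slot. The key is assumed to live outside the database.
    """
    message = f"{prompt_id}\x00{text}".encode("utf-8")
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def load_prompt(key: bytes, prompt_id: str, text: str, stored_tag: str) -> str:
    """Return the prompt text only if its stored tag verifies."""
    expected = sign_prompt(key, prompt_id, text)
    # compare_digest performs a constant-time comparison of the two tags.
    if not hmac.compare_digest(expected, stored_tag):
        raise RuntimeError(f"integrity check failed for prompt {prompt_id!r}")
    return text
```

This approach detects tampering rather than preventing it. An attacker with database write access could still swap in an older, legitimately signed version of the same prompt, so tracking the expected version outside the database would be needed to close that gap.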

The OWASP Agentic Top 10: What Lilli Got Wrong

The OWASP Top 10 for Agentic Applications, released in December 2025, identifies the most critical security risks facing autonomous AI systems. When we map the Lilli breach against this taxonomy, seven of the ten risks are directly implicated:

Tool-Layer Risks: Misuse, Identity Abuse, and Code Execution

Three risks target the tool integration surface. The search endpoint was a legitimate tool used in unsafe ways. Its database permissions far exceeded what a search function required, including write access to prompt configuration tables (ASI02: Tool Misuse). Twenty-two endpoints required no authentication, with no identity binding or distinction between authorized internal requests and unauthenticated external probes (ASI03: Identity and Privilege Abuse). And the SQL injection itself is a form of unexpected code execution: user-controlled input interpreted as executable commands against the production database, in a location that traditional scanners do not test (ASI05: Unexpected Code Execution).

Memory-Layer Risks: Goal Hijack, Poisoning, and Supply Chain Exposure

Three risks target the memory and knowledge layer. Write access to system prompts means an attacker can silently redirect the AI’s objectives, turning a trusted internal advisor into an adversary-controlled exfiltration engine without changing a line of code (ASI01: Agent Goal Hijack). With write access to the database, an attacker could poison the 3.68 million RAG document chunks that fed the AI’s knowledge base. A corrupted memory entry propagates indefinitely, and decades of proprietary McKinsey research could have been silently altered (ASI06: Memory and Context Poisoning). The 95 exposed AI model configurations, including system prompts, guardrails, fine-tuning details, and the full model stack, effectively leaked the platform’s internal AI supply chain, giving adversaries the visibility to craft targeted attacks against specific pipeline components (ASI04: Supply Chain Vulnerabilities).

Downstream Risk: Trust Exploitation

The most operationally devastating risk sits at the human interface. 43,000 consultants relied on Lilli for strategy, client work, and research. If system prompts were silently rewritten, the AI would produce poisoned advice that users would trust because it came from their own internal tool (ASI09: Human Trust Exploitation). The breach’s write access to prompts makes this the risk with the widest blast radius.

The remaining three risks, ASI07 (Insecure Inter-Agent Communication), ASI08 (Cascading Failures), and ASI10 (Rogue Agents), were not directly exploited in the Lilli breach, which targeted a single-agent platform. However, they represent the next-order threats that emerge when organizations scale from single-agent deployments to multi-agent architectures.

How to Prevent These Attacks from Happening

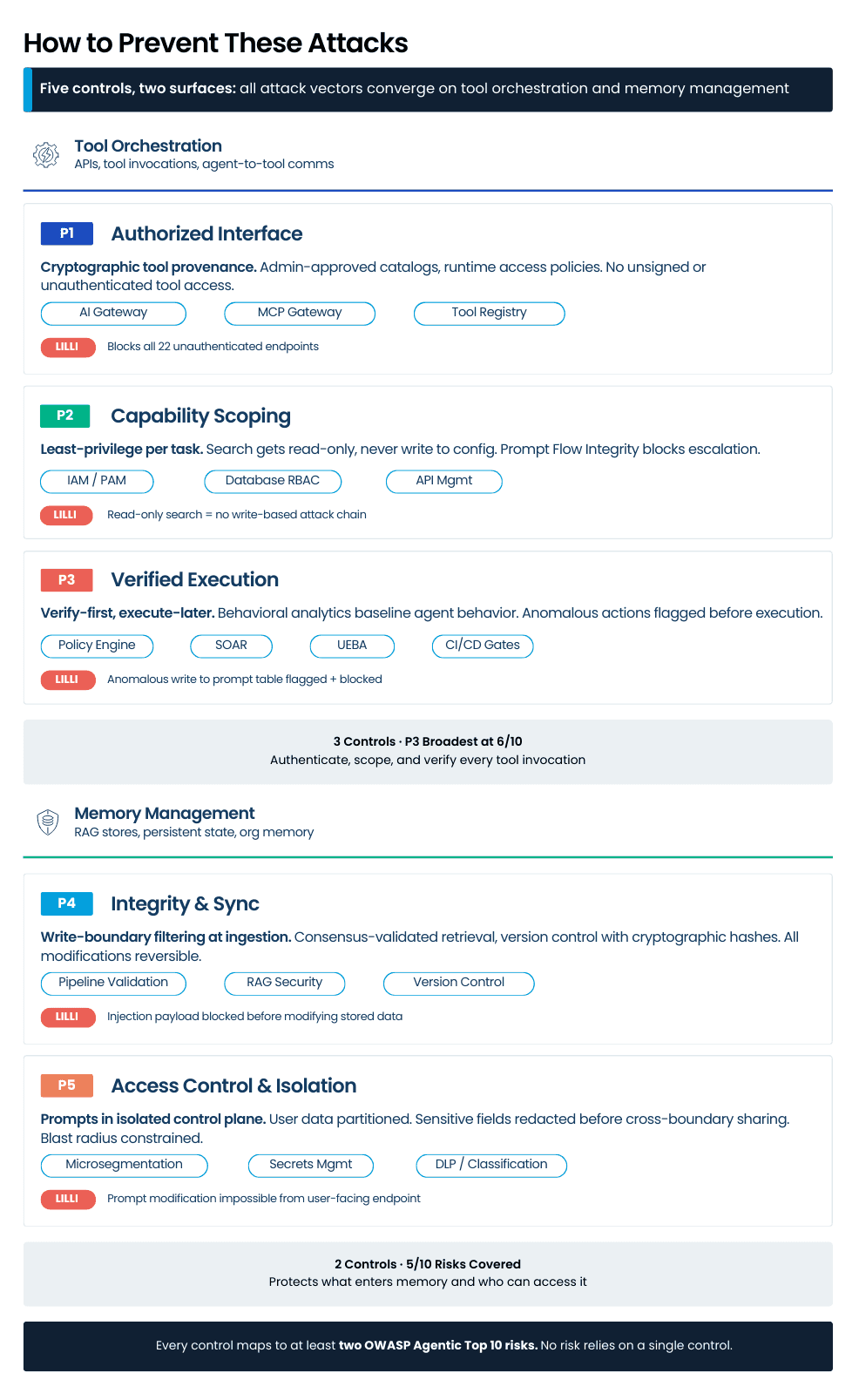

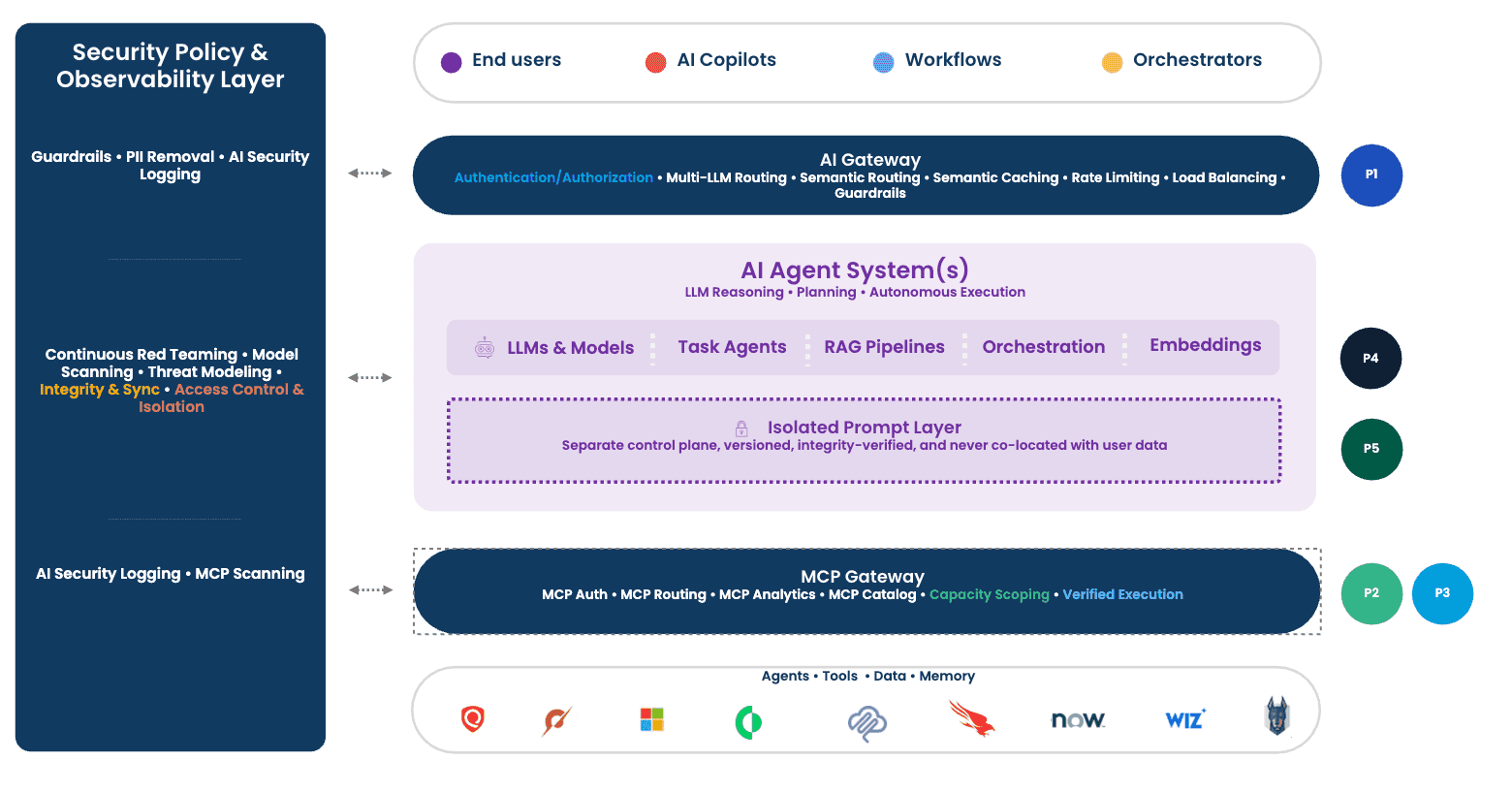

Every failure in the Lilli breach traces back to integration surfaces that were not treated as trust boundaries. The architecture below defines five controls to close those gaps: three governing the tool interaction lifecycle (authenticate, scope, verify) and two governing persistent state (integrity and access). Each is aligned with compliance standards including NIST SP 800-207, ISO 27001, GDPR, and the EU AI Act.

On the tool orchestration side, an AI gateway and MCP gateway enforce authenticated access to every endpoint (P1), IAM/PAM and database-level RBAC scope each tool to minimum required permissions per task (P2), and a policy engine with behavioral analytics verifies every action proposal against baselines before execution (P3). On the memory management side, data pipeline validation and RAG security tooling filter content at the write boundary and verify integrity at retrieval (P4), while microsegmentation, secrets management, and data classification partition the prompt control plane from user-facing data paths so a single vulnerability cannot traverse both (P5).
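As a small illustration of the P5 idea, the sketch below keeps system prompts in a separate store from user data and gives the user-facing code path only a read-only handle to it. It uses Python’s standard `sqlite3` module and SQLite’s `mode=ro` URI parameter purely as a stand-in, and the file names are hypothetical. In production, the partition would come from separate database instances, service accounts, and network segments rather than from a connection flag inside one process.

```python
import sqlite3
from pathlib import Path


def open_stores(data_dir: Path) -> tuple[sqlite3.Connection, sqlite3.Connection]:
    """Return (user_db, prompt_db) for the user-facing request path.

    user_db is read/write. prompt_db is opened with SQLite's mode=ro URI
    parameter, so any INSERT, UPDATE, or DELETE on it raises an error.
    Prompts are assumed to be written only by a separate admin pipeline.
    File names here are hypothetical.
    """
    user_db = sqlite3.connect(data_dir / "user_data.db")
    prompt_uri = (data_dir / "prompts.db").resolve().as_uri() + "?mode=ro"
    prompt_db = sqlite3.connect(prompt_uri, uri=True)
    return user_db, prompt_db
```

With this split, a write that reaches the prompt store through the user-facing path fails with `sqlite3.OperationalError` instead of silently rewriting the assistant’s instructions.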

The design achieves defense-in-depth: each control maps to at least two OWASP Agentic Top 10 risks, and no risk relies on a single control. In the Lilli scenario, P1 blocks the unauthenticated endpoints, P2 prevents the write access, P3 flags the anomalous behavior, P4 filters the injection payload, and P5 makes prompt modification impossible from a user-facing search endpoint. Any single control would have significantly limited the breach. With all five in place, the attack chain never gets past step one.

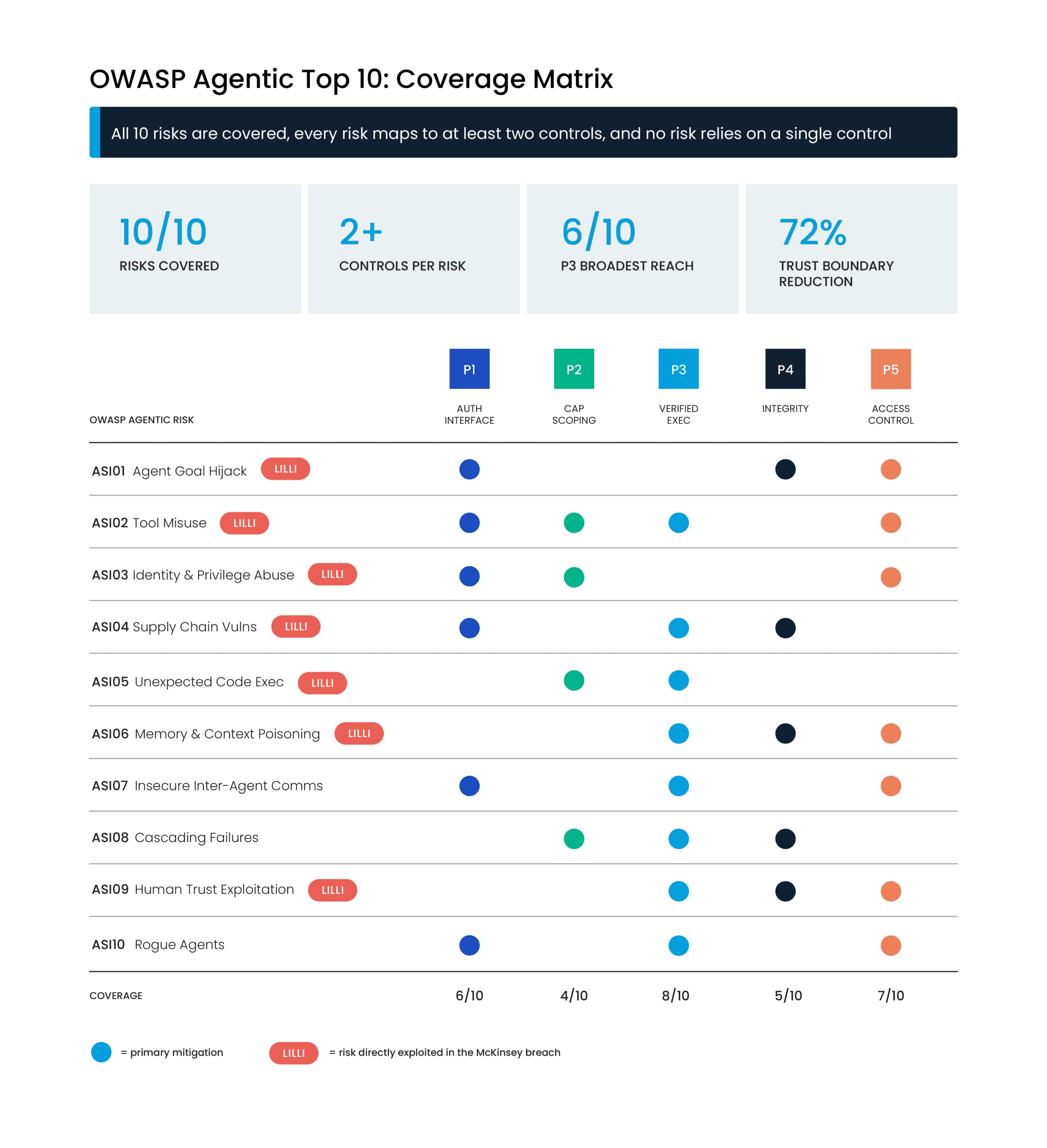

Full Coverage: Mapping Five Controls to the OWASP Agentic Top 10

If the OWASP mapping above shows what went wrong, the coverage matrix shows that the architecture does more than address Lilli’s seven failures: it covers all ten risks, including the three that have not yet materialized, and every risk maps to at least two controls.

This coverage distribution is by design, not coincidence. P3 (Verified Execution) has the broadest reach, at eight of ten risks, because it sits at the final checkpoint before any action fires; it catches threats that bypass authentication (P1) or scope enforcement (P2) by validating the action itself against behavioral baselines and policy. The two memory controls (P4 and P5) are complementary rather than overlapping: P4 protects what enters and exits memory, while P5 governs who can access which tier. Together, every attack path through the architecture encounters at least two independent verification points before reaching its target.
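The “at least two controls per risk” property is easy to check mechanically whenever the mapping changes. The helper below is a minimal Python sketch that inverts a control-to-risk mapping and reports every ASI risk covered by fewer than two controls. The mapping in the usage example is deliberately partial and hypothetical; it is not this article’s coverage matrix.

```python
ASI_RISKS = [f"ASI{n:02d}" for n in range(1, 11)]  # ASI01 through ASI10


def coverage_gaps(control_map: dict[str, set[str]], min_controls: int = 2) -> dict[str, int]:
    """Return {risk: number_of_controls} for risks below min_controls.

    control_map maps a control ID (e.g. "P1") to the set of risk IDs it
    mitigates. Risks that no control mentions are reported with a count of 0.
    """
    counts = {risk: 0 for risk in ASI_RISKS}
    for risks in control_map.values():
        for risk in risks:
            counts[risk] = counts.get(risk, 0) + 1
    return {risk: n for risk, n in counts.items() if n < min_controls}


if __name__ == "__main__":
    # Hypothetical, deliberately incomplete mapping for illustration only.
    example = {
        "P1": {"ASI02", "ASI03"},
        "P3": {"ASI02", "ASI03", "ASI05"},
    }
    print(coverage_gaps(example))
```

Running a check like this in the same pipeline that updates the control inventory turns the quarterly review into a regression test.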

Building AI-Secure Architecture: Where to Start

If you accept the argument this article makes, the question becomes: “What will you do differently starting Monday morning?”

AI-secure architecture is not a product you buy. It requires a shift in mindset, which in turn shapes the decisions that define your approach to AI security. The recommendations that follow are ordered by implementation priority (Foundation, Acceleration, and Optimization), each building on the one before it.

Foundation: Visibility and Access Control

Mindset: Start with what you can see and what you can lock down immediately. This is a pragmatic approach to securing AI investments: the goal is to identify quick wins that build momentum toward a truly secure architecture.

Action: Deploy an AI gateway [P1] to enforce authentication, authorization, and content inspection on every request between users or agents and the LLMs they invoke. Audit every API endpoint your AI platform exposes and eliminate unauthenticated access to any endpoint that handles data or invokes tools. The Lilli breach began with 22 endpoints that required no credentials. Inventory your prompt layer [P5]: where are system prompts stored, who has write access, and is there version control? If prompts live in the same database as user data, that is your highest-priority architectural remediation.
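Because Lilli’s API documentation was publicly exposed, a cheap first pass at the endpoint audit is to read your own OpenAPI document the way an attacker would. The Python sketch below assumes the spec has already been loaded into a dictionary (for example with `json.load`). It follows the OpenAPI 3 rules that an operation-level `security` array overrides the top-level one, and that an empty array or an empty requirement object `{}` permits anonymous calls. It reports only what the spec declares. It does not prove that the running service enforces those declarations, and undocumented endpoints will not appear, so it complements live testing rather than replacing it.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def unauthenticated_operations(spec: dict) -> list[tuple[str, str]]:
    """List (METHOD, path) pairs that an OpenAPI 3 document lets anyone call."""
    global_security = spec.get("security", [])
    findings = []
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            # An operation-level "security" array overrides the global default.
            security = operation.get("security", global_security)
            # Empty array: no auth at all. Empty object {}: auth is optional.
            if not security or {} in security:
                findings.append((method.upper(), path))
    return findings


if __name__ == "__main__":
    # Hypothetical spec fragment for illustration.
    spec = {
        "security": [{"bearerAuth": []}],
        "paths": {
            "/search": {"post": {"security": []}},
            "/users": {"get": {}},
        },
    }
    for method, path in unauthenticated_operations(spec):
        print(f"UNAUTHENTICATED: {method} {path}")
```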

Acceleration: Scoping, Segmentation, and Gateways

Mindset: Enforce least privilege at the tool level. This shift extends the Zero Trust principle of least privilege to AI agents and the tools they can access. Treat agents like humans with advanced capabilities but questionable judgment, and take appropriate steps to monitor and control every action they take within your environment.

Action: Every API endpoint and database connection should use service accounts with minimum required permissions scoped [P2] to their specific function. Deploy an MCP gateway for agent-to-tool communications, governing which agents can access which servers and whether individual tool calls are authorized. Isolate the prompt control plane [P5] from user-facing data paths through microsegmentation, ensuring a vulnerability in one surface cannot traverse to the other. Implement data classification and sensitivity labeling across your AI data stores to support memory integrity controls [P4].
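At a gateway or tool-broker layer, least privilege reduces to a deny-by-default lookup: a tool may perform an operation on a resource only if that exact pair was granted. The Python sketch below uses hypothetical tool names, table names, and grants.

```python
# Hypothetical grants: each tool gets only the (table, operation) pairs its
# function requires. The search tool can log queries and read documents but
# has no access to the system_prompts table at all.
TOOL_SCOPES: dict[str, frozenset[tuple[str, str]]] = {
    "search": frozenset({("search_queries", "INSERT"), ("documents", "SELECT")}),
    "prompt_admin": frozenset({("system_prompts", "SELECT"), ("system_prompts", "UPDATE")}),
}


def authorize(tool: str, table: str, operation: str) -> bool:
    """Deny by default: unknown tools and ungranted pairs are refused."""
    return (table, operation.upper()) in TOOL_SCOPES.get(tool, frozenset())
```

A check like this only sees the operations a tool declares. An injected SQL statement inside an otherwise permitted call would slip past it, which is why the same scopes should also be enforced by the database itself through separate service accounts and `GRANT` statements.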

Optimization: Behavioral Analytics, Red Teaming, and Framework Alignment

Mindset: Assume autonomous adversaries. Traditional penetration testing cadences are insufficient when autonomous agents can probe continuously at machine speed. Leverage AI as a defense against AI-augmented attacks.

Action: Implement automated, continuous red teaming against your AI applications and agents. Deploy behavioral analytics [P3] to baseline agent behavior and flag deviations. An agent that has never written to a prompt table and suddenly attempts to do so should trigger an alert before the action executes. Align to the OWASP Agentic Top 10 as your ongoing risk taxonomy. Map your architecture against it quarterly and validate that no risk relies on a single control.
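The prompt-table scenario just described can be sketched as a simple novelty check. The Python class below is a minimal illustration, not a production behavioral-analytics engine. It assumes a hypothetical learning period during which each agent’s (action, resource) pairs are recorded. Afterwards, it classifies every proposed action before execution: “allow” for previously seen behavior, “hold” when novel behavior touches a resource marked sensitive, and “alert” for other novel behavior.

```python
class BehaviorBaseline:
    """Per-agent novelty check on proposed actions (illustrative only)."""

    def __init__(self, sensitive_resources: set[str]) -> None:
        # agent -> set of (ACTION, resource) pairs seen during learning
        self.seen: dict[str, set[tuple[str, str]]] = {}
        self.sensitive = set(sensitive_resources)

    def observe(self, agent: str, action: str, resource: str) -> None:
        """Record an action during the (hypothetical) learning period."""
        self.seen.setdefault(agent, set()).add((action.upper(), resource))

    def check(self, agent: str, action: str, resource: str) -> str:
        """Classify a proposed action before it executes.

        "allow": behavior seen during learning.
        "hold":  novel behavior against a sensitive resource; block pending review.
        "alert": other novel behavior; permit but notify.
        """
        pair = (action.upper(), resource)
        if pair in self.seen.get(agent, set()):
            return "allow"
        if resource in self.sensitive:
            return "hold"
        return "alert"


if __name__ == "__main__":
    baseline = BehaviorBaseline({"system_prompts"})
    baseline.observe("search-agent", "SELECT", "documents")
    print(baseline.check("search-agent", "UPDATE", "system_prompts"))
```

Real baselines would also weigh frequency, timing, and data volume. The point of the sketch is only that the decision happens before the action fires, not after it appears in a log.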

How AHEAD Can Help

AI Governance

Align security efforts to shared AI governance frameworks encompassing ethics, responsible use, and regulatory compliance. We translate policy requirements into AI-contextual standards and operational taxonomies that drive consistent, measurable security outcomes.

Shadow AI Visibility

You can’t secure what you can’t see. We help discover Shadow AI across your environment by leveraging and augmenting existing tools to map the full AI landscape from both coverage and capability perspectives.

AI Security Assessment & Security Tools Rationalization

We conduct MITRE ATLAS and SAFE AI assessments and threat modeling to identify control gaps across identity, data access, and ethical use. We also rationalize existing security tools to eliminate overlap, address gaps discovered in the assessments, and provide financial analysis justifying investments for maximum budget efficiency.

Secure AI Architecture

Design and implement a contextual AI security architecture built on existing and new investments and tailored to organization-specific risks. This includes AI gateways, MCP gateways, identity controls, and continuous red teaming capabilities, to name a few.

Validation

Validate the effectiveness of AI security controls through AI-specific penetration testing, establishing a strong foundation for continuous automated red teaming and ongoing assurance.

Final Thoughts

The Lilli breach is a case study in what happens when organizations treat AI platform security as an extension of traditional application security. SQL injection went undetected for over two years in a platform built by one of the world’s most well-resourced organizations because the integration layer, where tools connect to models, prompts govern behavior, and memory accumulates institutional knowledge, was not treated as a first-class security surface.

The five controls presented here are not theoretical abstractions. Applied to Lilli, any single one would have significantly limited the breach; applied together, they would have stopped the attack chain at step one. But the Lilli scenario, a single autonomous agent attacking a single AI platform, is the simple case. The next frontier is multi-agent systems attacking multi-agent systems: autonomous offensive agents probing architectures where dozens of defensive agents share tools, memory, and execution privileges across organizational boundaries. The OWASP Agentic Top 10 already anticipates this with ASI07 (inter-agent communication), ASI08 (cascading failures), and ASI10 (rogue agents). The organizations that build their security architecture now, before the complexity compounds, will be the ones that can scale agentic AI without scaling their risk surface alongside it.

References

CodeWall. (2026, March 9). How We Hacked McKinsey’s AI Platform. codewall.ai/blog/how-we-hacked-mckinseys-ai-platform

Mitra, S., Patel, R., Mittal, S., Rahman, M. R., & Rahimi, S. (2026). AgenticCyOps: Securing Multi-Agentic AI Integration in Enterprise Cyber Operations. arXiv:2603.09134v1. University of Alabama.

OWASP GenAI Security Project. (2025, December 10). OWASP Top 10 for Agentic Applications 2026. genai.owasp.org

About the author

Felix Vargas

Senior Director, Security Specialist, Solution Engineering

Felix Vargas is a senior leader in AHEAD’s security practice, advising enterprises on modernizing their defenses across cloud, data center and AI-powered environments. He specializes in zero trust architecture, secure-by-design infrastructure and aligning cybersecurity strategy with business outcomes, and frequently works with customers building on NVIDIA technologies. Felix has more than 15 years of experience helping organizations reduce risk while accelerating innovation, and is a regular speaker on emerging threats, AI security, and the future of cyber resilience.
